AI vs. Financial Privacy: The Deepfake Extortion Era in 2026

The Collapse of Visual and Auditory Trust

Until very recently, biometric security and voice verification were considered the “gold standard” in banking and digital asset exchanges. In 2026, that barrier has effectively collapsed. The proliferation of low-latency voice synthesis models and real-time video generators has allowed attackers to impersonate people with terrifying precision.

We are no longer dealing with poorly written phishing emails or suspicious links; we are facing high-definition video calls from “family members,” “legal advisors,” or “account managers” requesting urgent transfers with appearances and voices indistinguishable from reality. The fundamental problem is that our brains are hardwired to trust what we see and hear, but in the current technological landscape, those senses have become easily hackable entry points.

My Critical Take: Why “Convenience” Became Our Weakest Link

In my opinion, the financial industry rushed into biometric convenience without considering the exponential growth of generative AI. We traded robust security for a “face scan” to unlock our funds, prioritizing user experience over actual safety. Now, that same face scan can be reconstructed by a $20-a-month AI tool accessible to anyone with an internet connection.

The industry is scrambling to patch these holes, but as an analyst, I see a massive gap between current banking protocols and the capabilities of modern malicious AI. We are essentially using 20th-century logic to fight 21st-century synthetic threats. If you rely solely on a face ID or a voice print to protect your life savings, you are operating under a false sense of security. The “human element,” once our greatest strength, is now the primary attack vector.

Social Engineering 4.0: Synthetic Identity Hijacking

The most common attack method in 2026 is “selective voice cloning.” Attackers no longer need hours of footage; they collect small audio fragments from social media, public videos, or even intercepted voice notes to train an AI model in a matter of seconds. Once they have the model, they place “emergency” calls to victims.

In the digital asset space, this translates into fake technical support or “compliance officers” calling from what appears to be a legitimate number. These attackers use the actual voices of known executives or company representatives to request seed phrases, secondary passwords, or the deactivation of security measures. The psychological pressure of hearing a familiar, authoritative voice makes even veteran investors bypass their own safety protocols in a moment of induced panic.

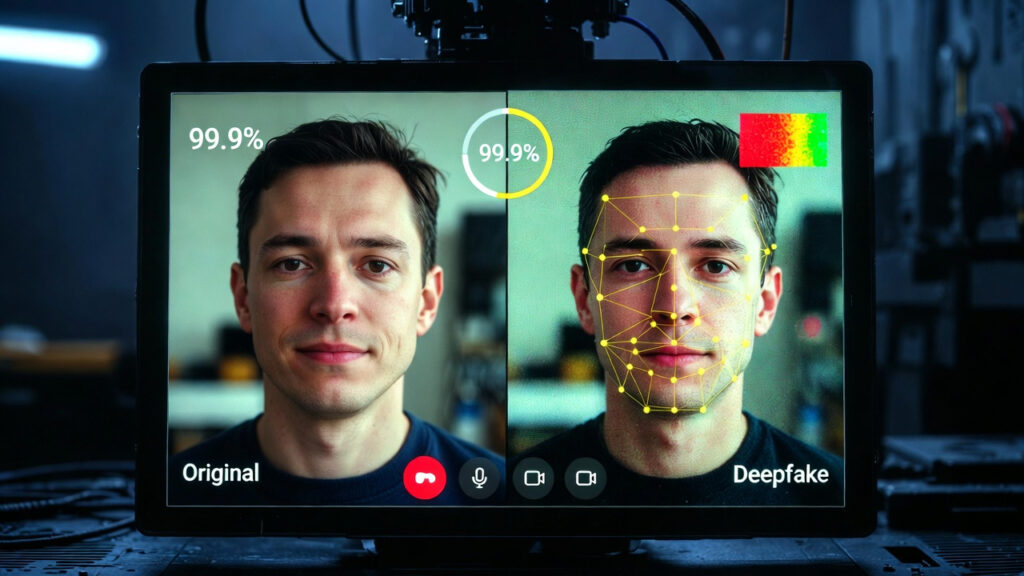

The Technical Anatomy of a 2026 Deepfake

Understanding the enemy is the first step toward defense. Modern deepfakes rest on three pillars: generative video models (Generative Adversarial Networks, or GANs, and increasingly diffusion models), neural voice cloning, and the hardware that runs both in real time.

- Visual Mapping: The AI maps over 128 unique points on a human face. In 2026, these maps can be applied to a digital “mask” that moves in real-time during a Zoom or Telegram call, perfectly mimicking the victim’s micro-expressions.

- Audio Synthesis: By analyzing just a few seconds of your voice, an AI can replicate your tone, pitch, and even your unique accent or “slang.”

- Hardware Acceleration: In the past, you could detect a deepfake by its lag or the “uncanny valley” look. Today, the massive jump in GPU processing power allows these fakes to be rendered with negligible delay, making them extremely hard to spot with the naked eye during a live interaction.

Table: Traditional Security vs. AI Threat Level (2026)

| Security Method | Vulnerability to AI | Current Risk Level | Recommended Alternative |
| --- | --- | --- | --- |
| Facial Recognition | Video Injection / Real-time Deepfake | High | Hardware Security Keys (FIDO2/U2F, e.g. YubiKey) |
| Voice Printing | RVC (Retrieval-based Voice Conversion) | Critical | Analog Passwords / Safe Words |
| SMS 2FA | Signal Interception / SIM Swapping | Medium-High | App-based Authenticators (TOTP) |
| Hardware Wallets | Immune to Digital Cloning | Very Low | Air-gapped Cold Storage |
| Standard Passwords | Brute Force / AI-Guessing | High | Biometric + Physical MFA |
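
To make the TOTP row concrete, here is a minimal sketch of how an authenticator app derives its six-digit code, following the public RFC 4226 (HOTP) and RFC 6238 (TOTP) algorithms. The function names `hotp`, `totp`, and `verify_totp` are my own, the secret is the RFC test value rather than a real key, and a production system should rely on a vetted library instead of this illustration.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HOTP (RFC 4226): HMAC-SHA1 over an 8-byte big-endian counter."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # low nibble of the last byte picks the window
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, now: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """TOTP (RFC 6238): HOTP where the counter is the current 30-second slot."""
    if now is None:
        now = time.time()
    return hotp(secret, int(now // step), digits)


def verify_totp(secret: bytes, submitted: str, now: Optional[float] = None,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from the current slot and `window` slots either side."""
    if now is None:
        now = time.time()
    counter = int(now // step)
    for candidate in range(max(0, counter - window), counter + window + 1):
        # compare_digest avoids leaking how many digits matched via timing
        if hmac.compare_digest(hotp(secret, candidate), submitted):
            return True
    return False


if __name__ == "__main__":
    rfc_secret = b"12345678901234567890"  # test secret from the RFCs
    print(totp(rfc_secret, now=59))       # RFC test vector: 287082
```

The code depends on a secret that stays on the device and on the current time, so a cloned voice or face gives an attacker nothing to compute it from. It can still be phished if you read the code aloud to a caller, which is why the verification habits discussed in this article matter as well.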

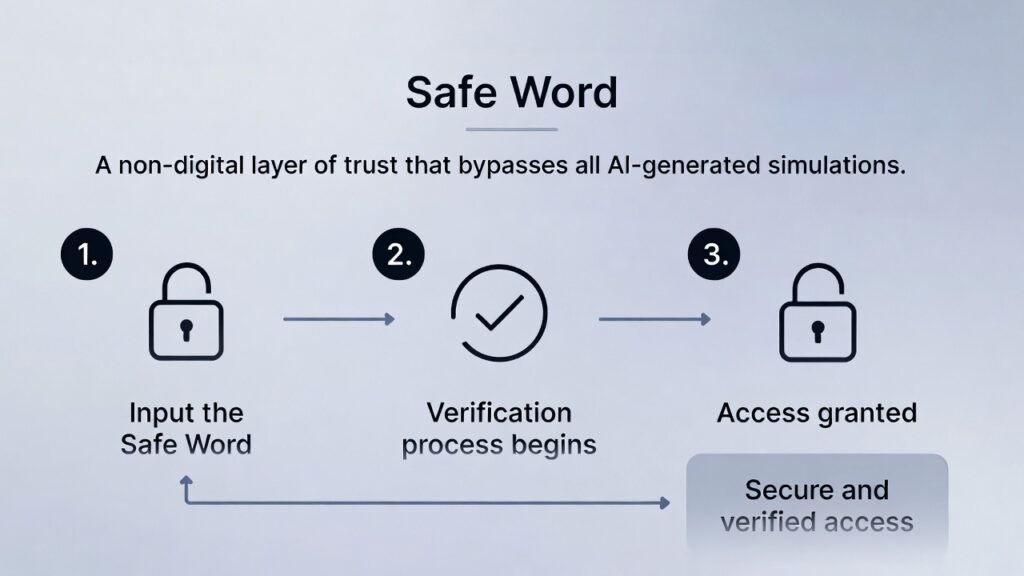

Defensive Strategies: The “Safe Word” Protocol

Since deception technology is now superior to human detection capabilities, the solution in 2026 is not technical, but operational. Establishing “Safe Words” or analog keywords is no longer a niche security tip; it is a necessity for anyone holding significant digital assets.

This method involves establishing a secret, offline phrase with family members, business partners, or close collaborators. If you receive a request for funds or sensitive information, even if the voice and image seem 100% real, the absence of the secret word must be immediately interpreted as an attack.

In my view, financial security in the AI era requires returning to verification methods that cannot be processed, guessed, or simulated by an algorithm. We are witnessing a return to “analog trust” in a digital-first world. This layer of friction is your best defense against the instantaneous nature of synthetic fraud. Verification must precede action, regardless of the perceived urgency of the situation.
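
The safe-word rule is deliberately low-tech, but its logic is simple enough to write down. The sketch below is one hypothetical way a household or small team might record a safe word in a tool without storing the phrase itself: it keeps only a salted PBKDF2 hash and treats a missing or wrong phrase as an attack. The names `enroll_safe_word` and `request_is_trusted`, the iteration count, and the example phrase are all assumptions for illustration.

```python
import hashlib
import hmac
import os
import unicodedata
from typing import Optional, Tuple

ITERATIONS = 200_000  # assumed work factor; tune for your own hardware


def _normalize(phrase: str) -> bytes:
    """Ignore case and spacing so 'Blue  Heron' and 'blue heron' match."""
    folded = unicodedata.normalize("NFKC", phrase).casefold()
    return " ".join(folded.split()).encode("utf-8")


def enroll_safe_word(phrase: str) -> Tuple[bytes, bytes]:
    """Return (salt, digest); the phrase itself is never stored."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", _normalize(phrase), salt, ITERATIONS)
    return salt, digest


def request_is_trusted(spoken: Optional[str], salt: bytes, digest: bytes) -> bool:
    """A missing or wrong phrase is treated as an attack, with no exceptions."""
    if not spoken:
        return False
    candidate = hashlib.pbkdf2_hmac("sha256", _normalize(spoken), salt, ITERATIONS)
    return hmac.compare_digest(candidate, digest)


if __name__ == "__main__":
    salt, digest = enroll_safe_word("Blue Heron 47")        # example phrase only
    print(request_is_trusted("blue heron 47", salt, digest))  # correct phrase
    print(request_is_trusted(None, salt, digest))             # caller never said it
```

Note that the function has no “urgent” override. That omission is the whole point: the check should not bend under pressure.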

AI’s Dual Role: The Detection Arms Race

It is important to acknowledge that the same technology facilitating fraud is being used to fight it. New-generation financial platforms are implementing “Liveness Detection” models. These systems analyze micro-movements in skin pores, blood flow patterns in the face (remote photoplethysmography, or rPPG), or inconsistencies in blinking frequency that are invisible to the human eye but betray an AI-generated video.
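
As a rough illustration of the rPPG idea, the sketch below looks for a heartbeat-like rhythm in the average green-channel brightness of a face region over time. It assumes the per-frame averages have already been extracted (the `green_means` list is a hypothetical input), and it covers only the final frequency-analysis step; real liveness systems do far more than this.

```python
import math
from typing import List, Optional


def estimate_pulse_bpm(green_means: List[float], fps: float,
                       low_hz: float = 0.7, high_hz: float = 4.0) -> Optional[float]:
    """Return the dominant frequency in the heart-rate band, in beats per minute.

    green_means: mean green value of the face region for each video frame.
    The band 0.7 to 4.0 Hz (42 to 240 bpm) is an assumed plausible pulse range.
    """
    n = len(green_means)
    if n < 2 or fps <= 0:
        return None
    mean = sum(green_means) / n
    centered = [v - mean for v in green_means]  # remove the constant brightness

    best_freq, best_power = None, 0.0
    for k in range(1, n // 2 + 1):              # naive DFT, one bin at a time
        freq = k * fps / n
        if freq < low_hz or freq > high_hz:
            continue
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(centered))
        im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return None if best_freq is None else best_freq * 60.0


if __name__ == "__main__":
    fps = 30.0
    # Synthetic 10-second signal with a faint 1.2 Hz (72 bpm) pulse component.
    frames = [100.0 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(300)]
    print(estimate_pulse_bpm(frames, fps))
```

A signal with no clear peak in that band is only a hint, not proof, that the face on screen has no pulse; compression, lighting, and motion can all hide or fake the rhythm.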

However, as an analyst, I must be brutally honest: relying on “detection” is a losing battle in the long run. The generative models creating the fakes are trained specifically to bypass the models detecting them. This is why we must prioritize “zero-trust” architectures. Prevention through isolation and physical verification remains the superior strategy because it doesn’t depend on an algorithm’s ability to spot a flaw.

Privacy Audit: Reducing Your Attack Surface in 2026

The best way to avoid being a target for a deepfake is to limit the amount of biometric material available on the internet. In 2026, overexposure on social media is no longer just a privacy concern; it is a technical vulnerability. Your public data is the “training set” for your own impersonation.

- Audio Hygiene: Be mindful of where your voice is recorded. Attackers only need a few seconds of clean audio to clone your pitch and cadence perfectly. If you have old public voice notes or clips, consider removing them.

- Visual Privacy: Avoid high-resolution, front-facing videos in public profiles. These provide the high-quality “blueprints” necessary for an AI to create a flawless 3D mask of your face.

- Metadata and Connection Scrubbing: Digital footprints allow attackers to map your social circle. By knowing who you trust, an AI can craft a believable “emergency” scenario involving people you would never suspect. Hiding public friend lists, tags, and location data makes that map harder to build.

The Psychological Element: “Social Hacking” via AI

We must address the fact that AI-driven fraud is 10% technology and 90% psychology. Attackers use AI to create what I call “Perfect Urgency.” They can simulate realistic background noises such as a hospital, an airport, or a police station to add emotional weight to their deepfake call.

My advice is simple: if the situation feels like an emergency that requires immediate financial movement, it is probably a scam. Legitimate financial institutions or family members will understand if you hang up and call back through a verified, secondary channel. In 2026, “Politeness” has become a security risk. Verification is a daily discipline that requires a cold, analytical mind.
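
The hang-up-and-call-back habit can be written as a tiny decision rule. In this sketch the `InboundRequest` fields, the `next_step` function, and the contact directory with its 555 numbers are all hypothetical; the only idea taken from the advice above is that the callback number comes from your own records, never from the caller.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class InboundRequest:
    claimed_identity: str            # who the caller says they are
    caller_supplied_number: str      # deliberately never used for the callback
    asks_for_money_or_secrets: bool  # transfers, seed phrases, passwords, etc.


def next_step(request: InboundRequest, directory: Dict[str, str]) -> str:
    """Decide what to do with a call, using only independently verified contacts."""
    if not request.asks_for_money_or_secrets:
        return "continue the conversation"
    verified = directory.get(request.claimed_identity)
    if verified is None:
        return "refuse: no independently verified contact on file"
    return f"hang up and call back on {verified}"


if __name__ == "__main__":
    my_contacts = {"account manager": "+1-555-0100"}  # entered by you, in advance
    call = InboundRequest("account manager", "+1-555-0199", True)
    print(next_step(call, my_contacts))
```

The rule never looks at how convincing the caller sounded, which is exactly the signal a deepfake is built to manipulate.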

Closing Thoughts for the Modern Investor

The transition to an AI-driven economy is inevitable, but so is the evolution of digital crime. We are moving toward a world where “seeing is no longer believing.” My stance remains firm: treat every digital interaction involving capital with a degree of healthy paranoia.

The tools that make our lives easier are the same ones being used by sophisticated actors to drain our wallets. If you aren’t upgrading your security protocols at the same rate you’re upgrading your portfolio, you are essentially leaving the vault door open. Security is no longer a feature you can “set and forget”; it is an active, ongoing process. In the 2026 market, your ability to doubt is just as important as your ability to invest.